On March 11th, I had the privilege to attend and present at EdTechTeacher’s AI in EDU Summit at Bentley University in Massachusetts. It’s been quite some time since I’ve presented in person at a conference, and let me tell you, I’ve certainly missed the adrenaline rush and camaraderie of in-person back-and-forth conversations.

The day was a whirlwind, and there was so much to take in! I wish there were a way to clone myself, because I wanted to attend everything. Since that wasn't an option, I can offer only a limited review of my time there, and I hope I do justice to the many amazing educators I had the chance to talk with and learn from.

Here are my takeaways from the day:

- Schools and districts need an AI policy. More importantly, this policy needs to be crafted with input from all stakeholders AND be flexible enough to be revised as AI platforms and capabilities change over time.

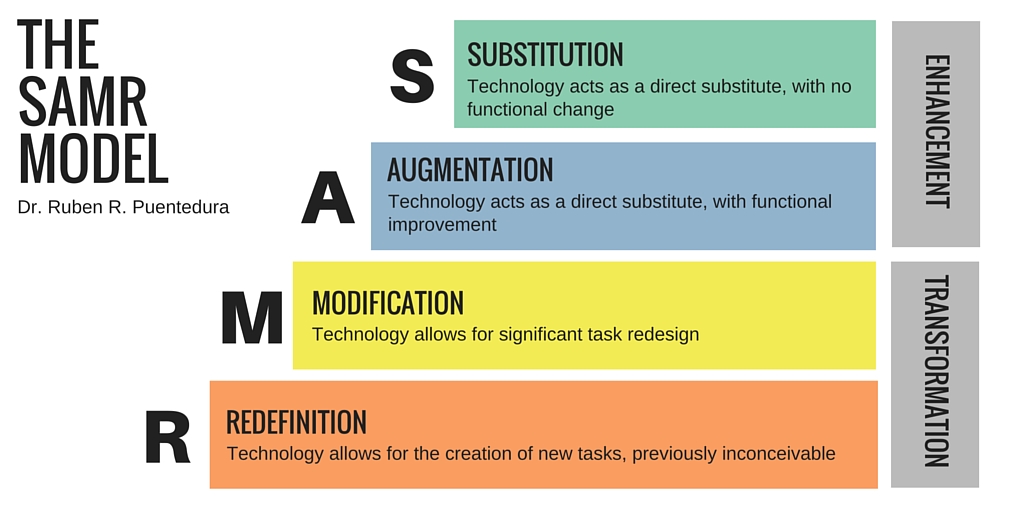

- Student learning outcomes need to drive AI use. For all the many useful, innovative, and enlightening uses of AI in the classroom, it is important to make sure that learning objectives drive the what, how, and why of technology integration. It's equally imperative that educators look closely at student learning to see to what extent AI is helping students engage in deeper learning rather than acquire superficial knowledge.

- Be curious, not judgmental. Okay, I totally stole that from Ted Lasso. But it's true. AI tools can evoke a sense of excitement but also dread, sometimes both at the same time. I encourage everyone to be open to the ways AI can help level the playing field for teachers and students across demographics, regions, and socio-economic levels. I acknowledge that there are legitimate concerns about using AI (first and foremost, student privacy), but let's begin these conversations about AI use with an open mind and a sense of wonder.

- Don't be afraid to pivot. I can't be the only one who thought one way about AI in general, or about a particular AI tool, only to change my mind when more information became available. With so many AI tools and platforms available (and emerging), your opinion of a given tool's value for teaching and learning will very likely change with product updates, regular use, and/or shifting priorities for student learning. My advice? Keep the conversations flowing and recognize that opinions will shift with the ever-changing landscape and reach of AI.

There are so many different perspectives on AI use and its projected impact on teaching and learning. But what I keep coming back to is the fact that AI is a tool. It's a medium for learning. It's not a silver bullet. What will move the needle on student outcomes is creating meaningful learning experiences grounded in research-based best practices, with measurable metrics. Period.

References

Driscoll, T., & McCusker, S. (2024). Crafting AI policy for schools: A step-by-step guide [Webinar]. https://youtu.be/lxu1WS5ojzs?si=_QCot72pDDfe5xB7

Holland, B. (2025). Is it working? Building an evidence base to inform AI initiatives [Presentation]. AI in EDU Summit. Bentley University, MA.